New case study: Driving Theory Test Preparation for ESOL Learners

Case Study · ESOL We ran a free, evening digital classroom to help refugees and migrants across the East of England pass the UK Driving

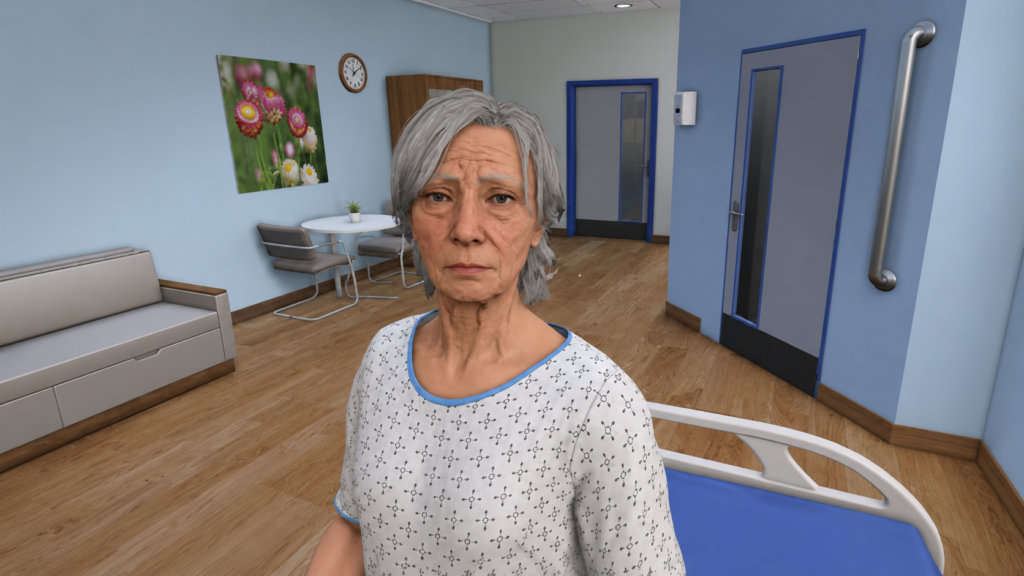

There is a familiar tension at the heart of clinical communication training: students need to practise high-stakes conversations, but the cost and logistical complexity of doing so with human actors, or standardised patients (SPs), has always placed a ceiling on how much practice any programme can realistically offer. AI-powered standardised patients are beginning to change that calculation, and the pace of development in 2025 and 2026 has been striking.

For medical English teachers, this is not a story about technology replacing human interaction. It is a story about a new kind of practice partner — one that is available at any hour, infinitely patient, endlessly scalable, and increasingly capable of generating the kind of authentic clinical language that students need to understand, respond to, and produce.

The case for AI standardised patients (AI-SPs) rests on a rapidly growing body of evidence. A 2026 randomised controlled trial published in Frontiers in Public Health evaluated AI-based virtual standardised patients against traditional SP training for clinical communication. The study found that AI-VSPs provided repeatable, accessible, and scalable training opportunities compared with traditional SP training, which is resource-intensive, time-consuming, and difficult to scale for large student cohorts (Sun et al., 2026).¹

A parallel strand of research has looked at what students actually want from these tools, and the findings are revealing. A study presented at the ACM CHI conference in Barcelona in April 2026 involved twelve clinical-year medical students in three co-design workshops, asking them to articulate their expectations of AI-SPs and to identify what was missing from current implementations. The researchers found that learning outcomes – not just conversational realism – drive learner trust, engagement, and educational value (Gao et al., 2026).² In other words, students are less concerned about whether the AI patient sounds exactly like a human being, and more concerned about whether the tool helps them learn something. This is a finding with direct implications for how language objectives can be built into AI-SP design.

The most striking clinical evidence comes from a randomised controlled trial of SOPHIE (Standardised Online Patient for Healthcare Interaction Education), an AI-powered virtual patient developed specifically for serious illness communication training. The trial, conducted from June to December 2024, compared SOPHIE training against a standard reading module. SOPHIE participants demonstrated significantly greater improvement in empathy, explicitness, and empowerment – the three core domains of serious illness communication – with effect sizes ranging from 0.59 to 0.92. SOPHIE participants also reported higher confidence in their communication skills (Haut et al., 2025).³

A UK-based mixed-methods study, conducted between November 2024 and March 2025 with 27 medical students from three universities, took a more accessible approach – using freely available ChatGPT-4o Advanced Voice Mode as a standardised patient. Pre- and post-session assessments found gains in students’ self-reported confidence in dealing with challenging patients, breaking bad news, and counselling anxious patients (Baseman et al., 2025).⁴

Each of these studies is primarily framed as a contribution to clinical education. But read through the lens of medical English and they point to something important: AI-SP interactions are, at their core, language events. The student who is learning to empathise with a patient in English, to use appropriate hedging language when discussing a poor prognosis, to manage the discourse of a difficult consultation – that student is doing language learning work, whether or not it is labelled as such.

This creates an opportunity and a responsibility for medical English specialists. If AI-SPs are going to be used at scale in medical and nursing education – and the evidence suggests they will – then language educators need to be involved in how the scenarios are written, what vocabulary and register the AI patient uses, how responses are assessed, and what feedback students receive on their linguistic choices. The rubrics that govern these assessments are not merely clinical tools: they are, in effect, language learning frameworks.

There is also a more immediate classroom application. A study published in December 2025 on LLM-based patient simulation for healthcare communication found that case-based learning using standardised patients is a key method for teaching communication skills, and that large language models show promise for creating scalable patient simulations that cover a broader diversity of patient demographics and clinical scenarios than human actors can realistically provide (Elhilali et al., 2025).⁵ For medical English teachers with access to AI tools, this opens the door to a new kind of classroom activity: scenario-based conversation practice in which the AI takes the patient role, the student responds in English, and the teacher focuses their feedback on the language dimension – register, accuracy, empathetic phrasing, and clarity.

None of this is straightforward. The research is clear that design matters enormously. An AI patient that is too passive fails to challenge students; one that is too unpredictable generates frustration rather than learning. The CHI 2026 study identified six learner-centred needs that AI-SP designers must address, emphasising that a conceptual workflow grounded in instructional usability is essential to educational value (Gao et al., 2026).² Equally, the UK ChatGPT study found that while students valued the AI practice, its effectiveness was limited because it was not human – and recommended briefing and debriefing sessions with a teacher as essential for framing and consolidating AI-mediated practice (Baseman et al., 2025).⁴

This is the equilibrium that medical English teachers are well positioned to establish: using AI tools to expand the volume and variety of communicative practice available to students, while preserving their own role as the human expert who coaches, contextualises, challenges, and deepens the learning that takes place in those interactions.
